Capcom draws a firm line on AI-generated assets in its games.

Capcom has stepped in to address one of the biggest talking points in gaming right now: generative AI. As players continue to question how much of their favourite titles is shaped by AI, the developer behind Resident Evil, Street Fighter, and Monster Hunter has clarified exactly where it stands, and where it draws the line.

The update comes from Capcom’s latest shareholders’ meeting, with the company publishing its Q&A session on 23 March. Among the questions raised was how the studio is approaching generative AI in game development, an issue that has become increasingly visible across the industry.

Is Capcom using generative AI in its games?

Capcom has confirmed it is not using generative AI to create in-game assets. “Our company will not implement the materials generated by our AI into game content,” it stated.

That said, the company is not turning away from the technology entirely. Capcom explained that it plans to “actively utilise this technology to improve efficiency and productivity” across development, including in graphics, sound, and programming. In other words, AI will stay behind the curtain, supporting developers rather than replacing them.

How is Capcom using AI to make games?

Capcom’s interest in AI-assisted workflows has been in motion for some time. As previously reported by Game*Spark (spotted by IGN), the company revealed in January 2025 that it had built a prototype “idea generation” system using Google Cloud.

The system is designed to tackle one of the most demanding parts of development: building out detailed game worlds. Technical director Kazuki Abe explained that teams often need to generate “hundreds of thousands of unique ideas” to populate environments, especially in large-scale titles. He noted that this process is both labour-intensive and time-consuming.

Capcom’s AI system reads design documents, including text, images, and spreadsheets, and generates additional ideas based on that data. This allows developers to brainstorm concepts more quickly, while still refining them through human input. Abe added that the tool can produce visual references to help communicate ideas to art directors and artists, who ultimately create the final assets.

The system also enables individual developers to experiment with ideas independently, and Capcom indicated that internal feedback on the tool has been positive.

Generative AI remains a divisive topic across the industry, with studios taking different approaches depending on their priorities and player expectations. Capcom’s stance arrives at a time when AI-driven tools are under increased scrutiny.

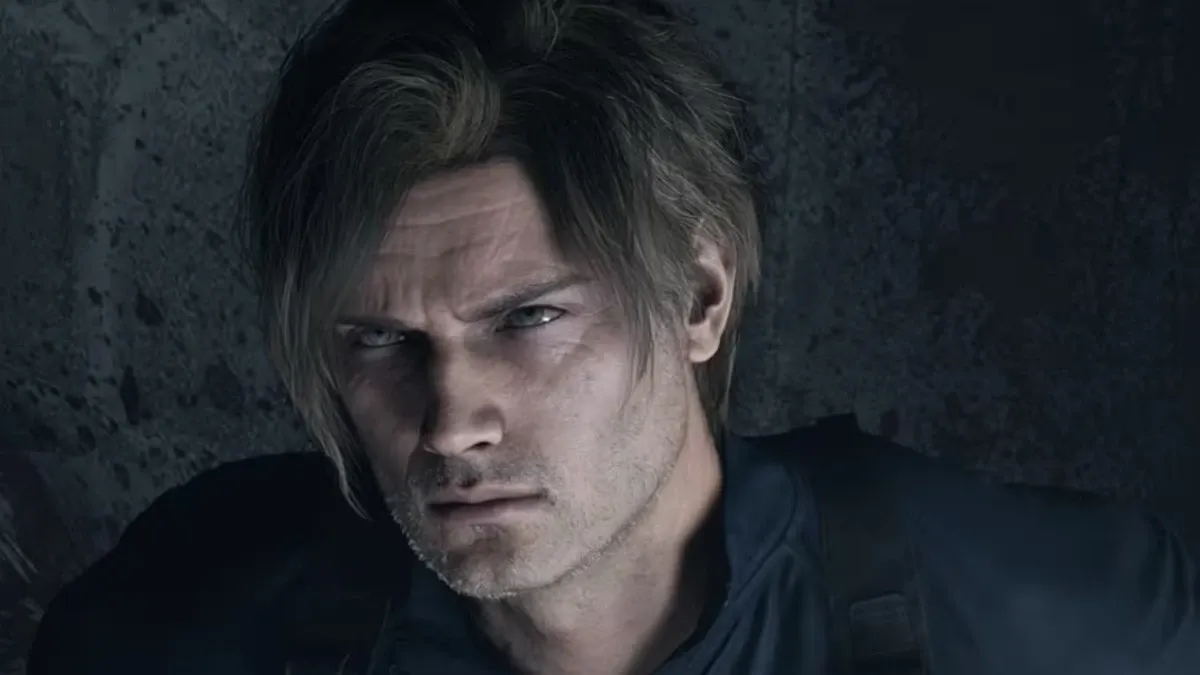

Recent discussions were fuelled by NVIDIA’s DLSS 5 showcase, which featured Resident Evil Requiem. The demo drew criticism from some players over its “photo-realistic” presentation, with members of the gaming community calling it “AI-slop”. NVIDIA later confirmed that DLSS 5 generates new frames using source data, effectively layering AI-rendered images over the original output.